Hackers have stolen more than $2B from crypto applications this year. The problem will only get worse as the crypto ecosystem grows and attracts more malicious actors. Something has to change. It’s time to take a step back, reflect on past mistakes, and change how we handle security in this industry.

In this article, I will:

- Present a framework for categorizing crypto hacks

- Outline the methods used in the most profitable hacks to date

- Review the strengths and weaknesses of the tools that are currently used to prevent hacks

- Discuss the future of security in crypto

Types of Hacks

The crypto application ecosystem is made up of interoperable protocols, powered by smart contracts, that rely on the underlying infrastructure of the host chain and the internet.

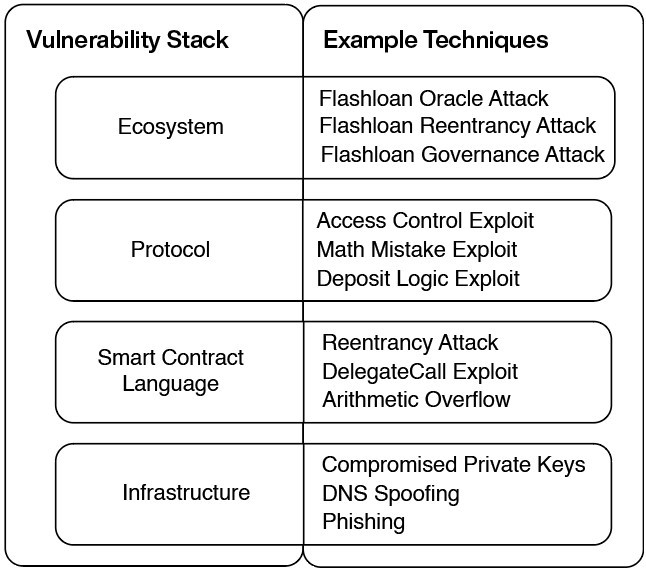

Each layer of this stack has its own unique vulnerabilities. We can classify crypto hacks based on the layer of the stack that was exploited, and the method that was used.

Infrastructure

Attacks on the infrastructure layer exploit weaknesses in underlying systems that support a crypto application: the blockchain it relies on for consensus, the internet services used for the frontend, and the tools used for private key management.

Smart Contract Language

Hacks on this layer exploit weaknesses in smart contract languages such as Solidity. There are commonly known vulnerabilities in smart contract languages, such as reentrancy* and the danger of bad delegatecall implementations, that can be mitigated by following best practices.

*Fun fact: Reentrancy, the vulnerability used to carry out the infamous $60M DAO hack, was actually identified in the security audit of Ethereum by Least Authority. Funny to think about how different things would be if that had been fixed pre-launch.

Protocol Logic

Attacks in this category exploit mistakes in the business logic of a single application. If a hacker finds a mistake, they can use it to trigger a behaviour that the app’s developers did not intend.

For example, if a new decentralised exchange has an error in the math equation that determines how much a user receives from a swap, that error can be exploited to receive more money from a swap than should have been possible.
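To make that concrete, here is a minimal sketch of a constant-product swap next to a hypothetical buggy variant. The function names, the 0.3% fee and the specific mistake (pricing at the spot rate and ignoring slippage) are assumptions for illustration. They are not taken from any real exchange.

```python
def swap_out_correct(reserve_in: int, reserve_out: int, amount_in: int, fee_bps: int = 30) -> int:
    """Tokens received under the constant-product rule x * y = k."""
    amount_in_after_fee = amount_in * (10_000 - fee_bps)
    return (amount_in_after_fee * reserve_out) // (reserve_in * 10_000 + amount_in_after_fee)


def swap_out_buggy(reserve_in: int, reserve_out: int, amount_in: int, fee_bps: int = 30) -> int:
    """Hypothetical bug: the denominator forgets the incoming amount,
    so every swap is priced at the spot rate regardless of its size."""
    amount_in_after_fee = amount_in * (10_000 - fee_bps)
    return (amount_in_after_fee * reserve_out) // (reserve_in * 10_000)


# A pool holding 1000 of each token receives a swap of 1000 tokens.
fair_payout = swap_out_correct(1000, 1000, 1000)   # roughly half of the output reserve
buggy_payout = swap_out_buggy(1000, 1000, 1000)    # nearly the entire output reserve
```

In the buggy version a single large swap walks away with almost the whole reserve, which is exactly the kind of unintended behaviour an attacker looks for.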

Protocol logic level attacks can also take advantage of the governance systems put in place to control an application’s parameters.

Ecosystem

Many of the most impactful crypto hacks take advantage of the interaction between multiple applications. The most common variant is when hackers exploit logic mistakes in one protocol using funds borrowed from another to amplify the scale of the attack.

Usually, the funds used in an ecosystem attack are borrowed using a flashloan. With a flashloan, you can borrow as much as the liquidity pools of protocols like Aave and dYdX hold, without putting up collateral, as long as the funds are returned within the same transaction.
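The "returned within the same transaction" rule can be sketched as a toy model. Everything here (`LiquidityPool`, `flash_loan`, the callback shape) is a hypothetical simplification, and it leaves out the fee that real protocols charge.

```python
class FlashLoanNotRepaid(Exception):
    """Raised when the borrower fails to return the funds; models a revert."""


class LiquidityPool:
    def __init__(self, balance: int):
        self.balance = balance

    def flash_loan(self, amount: int, use_funds) -> None:
        if amount > self.balance:
            raise ValueError("cannot lend more than the pool holds")
        balance_before = self.balance
        self.balance -= amount
        repaid = use_funds(amount)         # borrower does anything, then repays
        self.balance += repaid
        if self.balance < balance_before:
            # On-chain, a revert undoes every state change in the transaction.
            # This toy model only restores the pool's own balance.
            self.balance = balance_before
            raise FlashLoanNotRepaid("loan must be repaid in the same transaction")
```

A borrower that returns the full amount leaves the pool untouched. One that does not causes the whole call to fail, which is why the lender does not need collateral.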

Data Analysis

I collected a dataset of 100 of the biggest crypto hacks from 2020 onwards, totalling $5B in stolen funds.

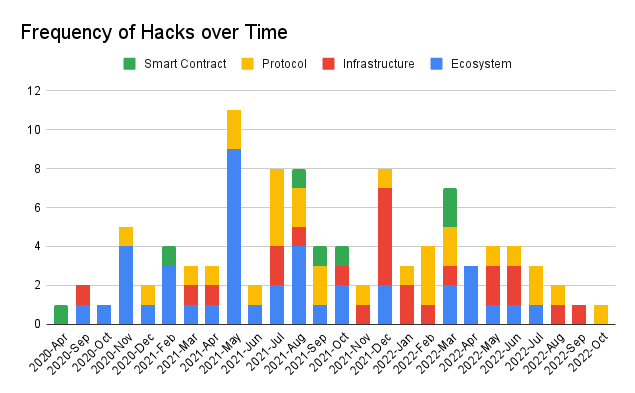

Ecosystem attacks occurred the most often. They make up 41% of the sample group.

Protocol logic exploits have resulted in the most money lost.

The three biggest hits in the dataset, the Ronin bridge attack ($624M), the Poly Network hack ($611M), and the Binance bridge hack ($570M), have an outsized impact on the results.

If you exclude the top three attacks, then infrastructure hacks are the most impactful category by funds lost.

How are hacks executed?

Infrastructure Attacks

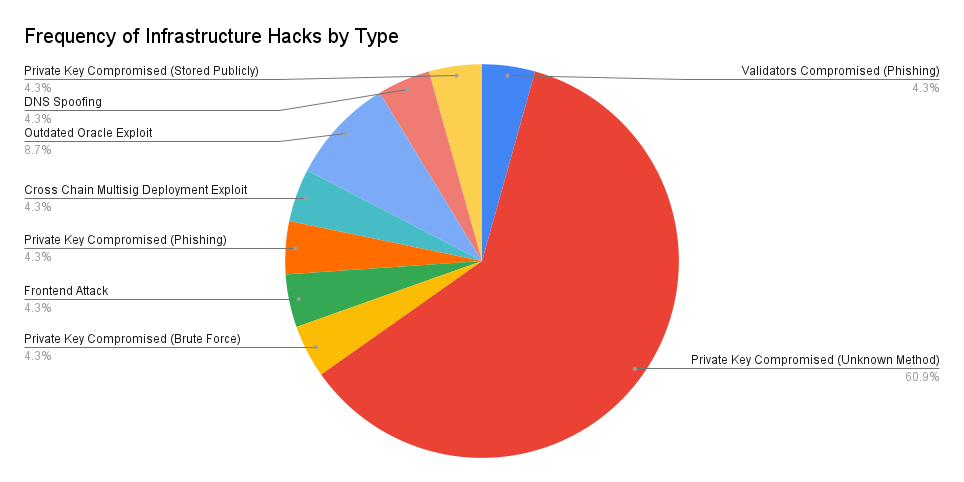

In 61% of the infrastructure exploits in the sample group, private keys were compromised by unknown means. Hackers may have gained access to these private keys using social attacks like phishing emails and fake job ads.

Reentrancy attacks were the most popular type of attack on the smart contract language level.

In a reentrancy attack, a function in the vulnerable smart contract calls a function on the malicious contract. Alternatively, a function in the malicious contract can be triggered when the vulnerable contract sends tokens to the malicious one. That malicious function then calls back into the vulnerable function in a recursive loop before the vulnerable contract updates its balance.

For example, in the Siren Protocol hack, the function for withdrawing collateral tokens was vulnerable to reentrancy and got called repeatedly (every time the malicious contract received tokens) until all the collateral was drained.
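The pattern is easier to see in a small simulation. This is a Python toy model, not Solidity and not Siren's code. The class names, the `receive` callback and the deposit amounts are all assumptions made for illustration.

```python
class VulnerableVault:
    """Toy vault that pays out before it updates its books."""

    def __init__(self):
        self.funds = 0
        self.balances = {}

    def deposit(self, user, amount):
        self.funds += amount
        self.balances[user] = self.balances.get(user, 0) + amount

    def withdraw(self, user):
        amount = self.balances.get(user, 0)
        if amount == 0 or self.funds < amount:
            return
        self.funds -= amount
        user.receive(self, amount)   # external call hands control to the receiver
        self.balances[user] = 0      # balance is cleared too late


class SafeVault(VulnerableVault):
    """Same vault, but state is updated before the external call."""

    def withdraw(self, user):
        amount = self.balances.get(user, 0)
        if amount == 0 or self.funds < amount:
            return
        self.balances[user] = 0      # effect first
        self.funds -= amount
        user.receive(self, amount)   # interaction last


class HonestUser:
    def __init__(self):
        self.wallet = 0

    def receive(self, vault, amount):
        self.wallet += amount


class Attacker(HonestUser):
    def receive(self, vault, amount):
        self.wallet += amount
        vault.withdraw(self)         # re-enter before the vault finishes


def run_attack(vault_cls):
    """Victim deposits 90, attacker deposits 10, attacker withdraws once."""
    vault, victim, attacker = vault_cls(), HonestUser(), Attacker()
    vault.deposit(victim, 90)
    vault.deposit(attacker, 10)
    vault.withdraw(attacker)
    return attacker.wallet, vault.funds
```

Against `VulnerableVault` the attacker's single withdrawal keeps re-entering until the vault is empty. Against `SafeVault` the second entry sees a zero balance and stops, so the attacker only gets their own deposit back.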

Most of the exploits on the protocol layer are unique to that specific application because every application has unique logic (unless it’s a pure fork).**

Access control mistakes were the most commonly recurring problem in the sample group. For example, in the Poly Network hack, the “EthCrossChainManager” contract had a function anyone could call to execute a cross-chain transaction.

This contract owned the “EthCrossChainData” contract, so if you set “EthCrossChainData” as the target of your cross-chain transaction, you could bypass the onlyOwner() check.

All that was left to do was craft the right message to change which public key was defined as the protocol’s ‘keeper’, seize control and drain funds. Regular users should never have been able to access functionality on the “EthCrossChainData” contract.
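A stripped-down model of that ownership bypass looks like this. It is a Python sketch with hypothetical class and method names standing in for the two contracts, and it skips the message-crafting step entirely.

```python
class CrossChainData:
    """Stands in for EthCrossChainData: only its owner may change the keeper."""

    def __init__(self, owner, keeper):
        self.owner = owner
        self.keeper = keeper

    def set_keeper(self, caller, new_keeper):
        if caller is not self.owner:          # the onlyOwner check
            raise PermissionError("caller is not the owner")
        self.keeper = new_keeper


class CrossChainManager:
    """Stands in for EthCrossChainManager: anyone can ask it to call any target."""

    def execute(self, target, method, *args):
        # The manager itself becomes the caller, so owner-only checks on
        # contracts it owns pass no matter who requested the call.
        return getattr(target, method)(self, *args)


manager = CrossChainManager()
data = CrossChainData(owner=manager, keeper="honest_keeper")

# Calling the data contract directly is rejected...
try:
    data.set_keeper("attacker", "attacker_key")
    direct_call_blocked = False
except PermissionError:
    direct_call_blocked = True

# ...but routing the same call through the manager succeeds.
manager.execute(data, "set_keeper", "attacker_key")
```

The owner check works as written. The problem is that the owner will relay a call from anyone, so the check protects nothing.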

**Note: There have been many cases where multiple protocols got hacked using the same technique because the team forked a codebase that had a vulnerability.

For example, many Compound forks like CREAM, Hundred Finance and Voltage Finance fell victim to reentrancy attacks because Compound’s code does not check the effect of an interaction before allowing it. This worked fine for Compound because they vetted each new token they supported for that vulnerability, but the teams that made forks did not do that diligence.

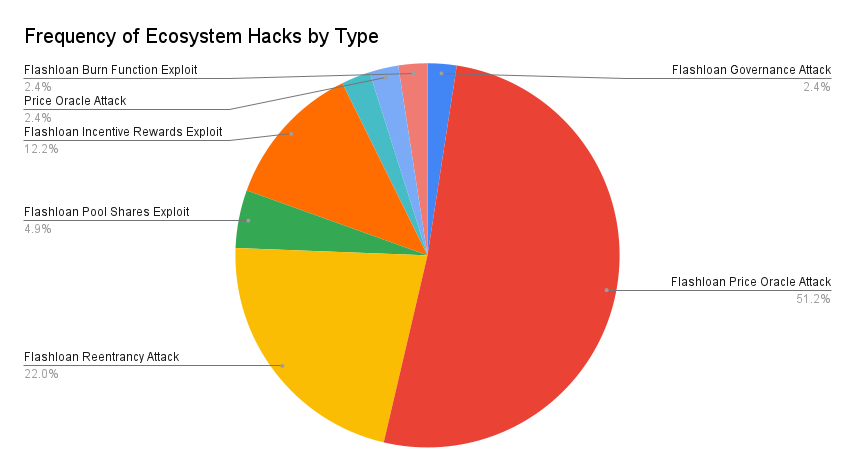

Flashloans were used in 98% of ecosystem attacks.

Flashloan attacks usually follow this formula: Use the loan to do massive swaps that drive up the price of a token on an AMM that a lending protocol uses as a price feed. Then, in that same transaction, use that inflated token as collateral to take a loan far above its true value.
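Here is that formula as a toy calculation. The pool sizes, the 50% loan-to-value ratio and the names `Amm` and `max_borrow` are assumptions, and fees are ignored to keep the arithmetic readable.

```python
class Amm:
    """Constant-product pool of TOKEN against USD (no fees, for brevity)."""

    def __init__(self, token_reserve, usd_reserve):
        self.token = token_reserve
        self.usd = usd_reserve

    def spot_price(self):
        return self.usd / self.token

    def buy_token(self, usd_in):
        k = self.token * self.usd
        new_usd = self.usd + usd_in
        new_token = k / new_usd
        token_out = self.token - new_token
        self.token, self.usd = new_token, new_usd
        return token_out


def max_borrow(amm, collateral_tokens, loan_to_value=0.5):
    """Lending rule that trusts the AMM's spot price as its oracle."""
    return collateral_tokens * amm.spot_price() * loan_to_value


amm = Amm(token_reserve=1000, usd_reserve=1000)
price_before = amm.spot_price()

flash_loan_usd = 9000
tokens_bought = amm.buy_token(flash_loan_usd)    # massive swap moves the price
price_after = amm.spot_price()

loan_taken = max_borrow(amm, tokens_bought)      # borrow against inflated collateral
profit = loan_taken - flash_loan_usd             # left over after repaying the flashloan
```

In this sketch the attacker repays the flashloan out of the oversized loan and simply abandons the collateral, which is worth far less than the lending protocol believed.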

When are hacks executed?

The dataset isn’t large enough to derive meaningful trends from the time distribution. But we can see that different types of attacks occur more frequently at different times.

May 2021 was the all-time high for ecosystem attacks. July 2021 had the most protocol logic attacks. December 2021 saw the most infrastructure attacks. It’s hard to tell if those clusters are coincidental or if they’re cases of one successful hit inspiring the same actor or others to focus on specific categories.

Smart contract language level exploits were the rarest. This dataset starts in 2020, when most exploits in this category were already well known and likely to be caught early.

The distribution of funds stolen over time has four main peaks. There is a peak in August 2021 that is driven by the Poly Network hack. There is another big spike in December 2021 caused by a large cluster of infrastructure hacks where private keys were compromised, for example, 8ight Finance, Ascendex and Vulcan Forged. Then, we see the all-time high in March 2022 because of the Ronin hack. The final spike is caused by the Binance bridge attack.

Where are hacks executed?

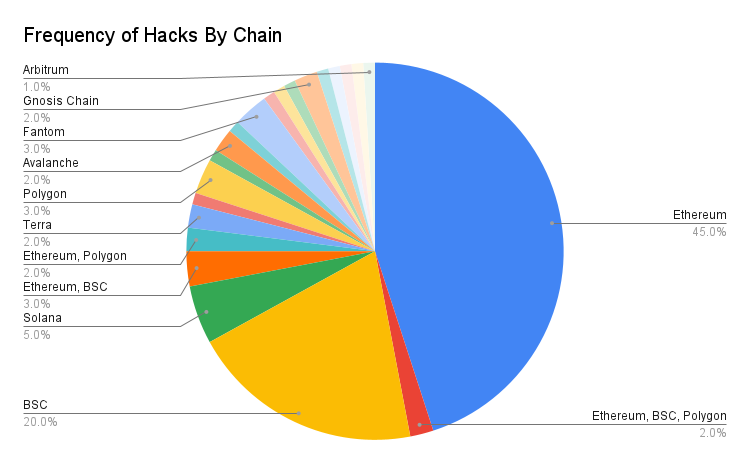

I segmented the dataset based on which chain hosted the contract or wallet the funds were stolen from. Ethereum had the highest quantity of hacks with 45% of the sample group. Binance Smart Chain (BSC) was second with 20%.

There are a number of factors causing this:

- Ethereum and BSC have the highest Total Value Locked (funds deposited in applications), so the size of the prize is larger for hackers on those chains.

- Most crypto developers know Solidity, the smart contract language of choice on Ethereum and BSC, and there is more sophisticated tooling that supports the language.

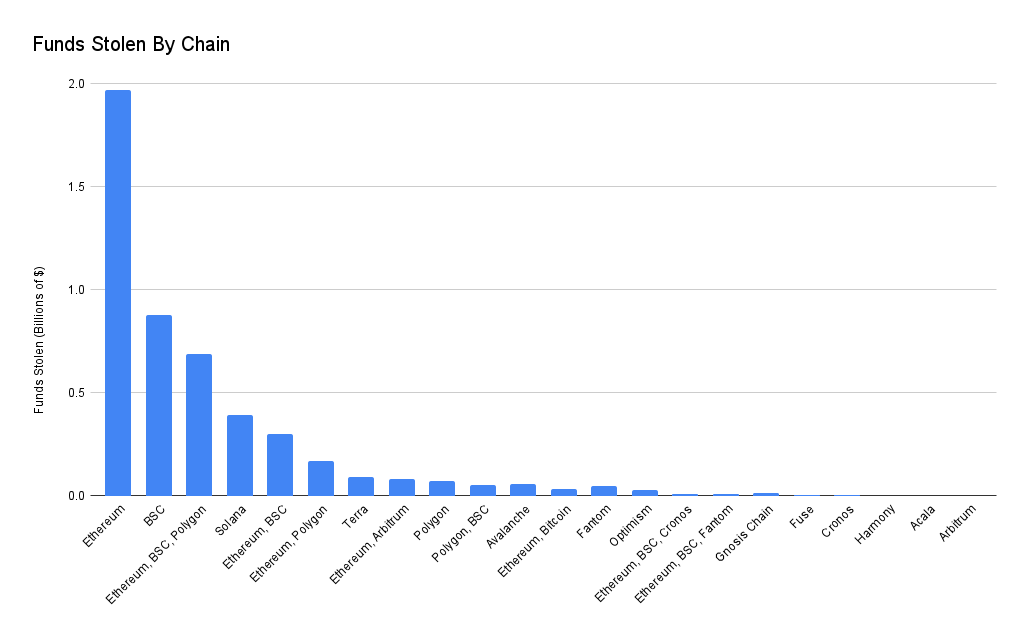

Ethereum had the highest volume of funds stolen ($2B). BSC was second ($878M). Hacks where funds were stolen on Ethereum, BSC and Polygon in a single event ranked third ($689M). This is mainly because of the Poly Network attack.

Hacks that involve bridges or multichain applications (e.g. multichain swaps or multichain lending) had a huge impact on the dataset. Despite only accounting for 10% of the events, these hacks accounted for $2.52B of stolen funds.

How do we prevent hacks?

For each layer of the threat stack, there are tools that we can use to identify potential attack vectors early and prevent exploits from happening.

Infrastructure

Most big infrastructure hacks involve hackers getting sensitive information such as private keys. Following good operational security (OPSEC) practices and doing recurrent threat modelling reduces the likelihood of this happening. Developer teams that have good OPSEC processes will:

- Identify sensitive data (private keys, employee information, API keys, etc.)

- Identify possible threats (social attacks, technical exploits, internal threats, etc.)

- Identify loopholes and weaknesses in their existing security defenses

- Decide the threat level of each vulnerability

- Create and implement a plan to mitigate threats

Smart Contract Language and Protocol Logic

Fuzzing

Fuzzing tools, like Echidna, test how a smart contract reacts to a wide set of randomly generated transactions. This is a good way to detect edge cases where specific inputs create unexpected results.
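The idea behind fuzzing can be sketched in a few lines of Python. This is not how Echidna is invoked. The `ToyToken` contract, its planted self-transfer bug and the `fuzz` helper are all hypothetical.

```python
import random


class ToyToken:
    def __init__(self, holders, supply_each):
        self.balances = {holder: supply_each for holder in holders}
        self.total_supply = supply_each * len(holders)

    def transfer(self, sender, recipient, amount):
        sender_balance = self.balances[sender]
        recipient_balance = self.balances[recipient]
        if amount > sender_balance:
            return False
        # Planted bug: both balances were read up front, so a self-transfer
        # overwrites the debit with the credit and mints tokens.
        self.balances[sender] = sender_balance - amount
        self.balances[recipient] = recipient_balance + amount
        return True


def supply_is_conserved(token):
    """The property under test: transfers never create or destroy tokens."""
    return sum(token.balances.values()) == token.total_supply


def fuzz(seed=0, runs=1000, steps=20):
    """Throw random transfer sequences at the token until the property breaks."""
    rng = random.Random(seed)
    holders = ["alice", "bob", "carol"]
    for _ in range(runs):
        token = ToyToken(holders, 100)
        trace = []
        for _ in range(steps):
            call = (rng.choice(holders), rng.choice(holders), rng.randint(0, 150))
            token.transfer(*call)
            trace.append(call)
            if not supply_is_conserved(token):
                return trace          # the sequence of calls that broke the property
    return None
```

The developer only states the property. The fuzzer is the one that stumbles on the edge case of sending tokens to yourself.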

Static analysis

Static analysis tools, such as Slither and Mythril, automatically detect vulnerabilities in smart contracts. These tools are great for quickly picking out common vulnerabilities, but they can only catch a set of predefined problems. If there is an issue with the smart contract that isn’t in the tool’s specification, it will not be seen.
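A deliberately crude sketch shows both the strength and the limitation. Real analysers work on much richer representations of the code than raw text, and the three rules below are assumptions I picked for illustration, not the rule set of Slither or Mythril.

```python
import re

# A fixed catalogue of known-bad patterns (hypothetical rule names).
RULES = {
    "tx-origin-auth": re.compile(r"\btx\.origin\b"),
    "delegatecall": re.compile(r"\.delegatecall\s*\("),
    "selfdestruct": re.compile(r"\bselfdestruct\s*\("),
}


def scan(source: str):
    """Return (line number, rule name) for every line matching a known pattern."""
    findings = []
    for line_no, line in enumerate(source.splitlines(), start=1):
        for rule_name, pattern in RULES.items():
            if pattern.search(line):
                findings.append((line_no, rule_name))
    return findings


known_problems = "require(tx.origin == owner);\ntarget.delegatecall(data);"
logic_bug = "uint out = amountIn * reserveOut / reserveIn;"

flagged = scan(known_problems)   # both lines match a rule
missed = scan(logic_bug)         # wrong swap math, but no rule describes it
```

The scanner flags the first snippet instantly and passes the second without complaint, because nothing in its catalogue describes a pricing mistake.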

Formal verification

Formal verification tools, like Certora, compare a smart contract with a specification written by the developers. This specification details what the code is supposed to do and its desired properties. For example, a developer building a lending app would specify that every loan has to be backed by sufficient collateral.

If any of the possible behaviours of the smart contract do not meet the specification, the formal verifier will identify that violation.

The weakness of formal verification is that the test is only as good as the specification. If the specification provided does not account for certain behaviours or is too permissive, then the verification process will not catch all of the bugs.
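As a rough sketch of the idea, the lending example can be checked by brute force over a small range of values. Real provers reason about all inputs rather than enumerating a bounded range, so this swaps in a much weaker technique. The 150% collateral ratio and the function names are assumptions.

```python
from itertools import product

COLLATERAL_RATIO_PCT = 150  # assumed: debt must be backed by 150% collateral


def spec_holds(collateral: int, debt: int) -> bool:
    """Specification: every loan is backed by sufficient collateral."""
    return collateral * 100 >= debt * COLLATERAL_RATIO_PCT


def can_borrow_buggy(collateral: int, debt: int, amount: int) -> bool:
    """Hypothetical bug: checks the new loan alone and ignores existing debt."""
    return collateral * 100 >= amount * COLLATERAL_RATIO_PCT


def can_borrow_fixed(collateral: int, debt: int, amount: int) -> bool:
    return collateral * 100 >= (debt + amount) * COLLATERAL_RATIO_PCT


def find_counterexample(can_borrow, bound: int = 20):
    """Search every (collateral, debt, amount) below `bound` for a spec violation."""
    for collateral, debt, amount in product(range(bound), repeat=3):
        if not spec_holds(collateral, debt):
            continue  # only start from states that already satisfy the spec
        if can_borrow(collateral, debt, amount) and not spec_holds(collateral, debt + amount):
            return collateral, debt, amount
    return None
```

The check reports the first state in which the buggy rule approves a loan that breaks the specification, and finds nothing for the fixed rule. If `spec_holds` itself were too permissive, both versions would pass, which is the weakness described above.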

Audit and Peer Review

During an audit or peer review, a trusted group of developers will test and review a project’s code. Auditors will write a report detailing the vulnerabilities they found and recommendations for how to fix those problems.

Having an expert third-party review contracts is a great way to identify bugs the original team have missed. However, auditors are human too, and they will never catch everything. Also, you have to trust that if an auditor finds a problem, they will tell you instead of exploiting it themselves.

Ecosystem

Frustratingly, despite ecosystem attacks being the most common and devastating variant, there aren’t many tools in the toolbox suited to preventing these types of exploits.

Automated security tools focus on finding bugs in one contract at a time. Audits usually fail to address how the interaction between multiple protocols in the ecosystem can be exploited.

Monitoring tools like Forta and Tenderly Alerts can give an early warning when a composability attack occurs so that the team can take action. But during a flashloan attack, funds are usually stolen in a single transaction, so any alert would arrive too late to prevent huge losses.

Threat detection models can be used to find malicious transactions in the mempool, where transactions sit before a node processes them, but hackers can bypass these checks by sending their transactions directly to miners using services like Flashbots.

The future of security

I have two predictions for the future of crypto security:

1/ I believe that the best teams will shift from treating security like an event-based practice (testing -> peer review -> audit) to treating it like a continuous process. They will:

- Run static analysis and fuzzing on every addition to the main codebase.

- Run formal verification on every major upgrade.

- Set up monitoring and alert systems with response actions (pausing the entire application or the specific module that was affected).

- Dedicate some team members to making and maintaining security automations and attack response plans.
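The monitoring-with-response item in the list above could look something like the following sketch. The `PausableVault` and `OutflowMonitor` classes and the 20% threshold are hypothetical, and a check like this only helps against drains that span more than one transaction.

```python
class PausableVault:
    def __init__(self, funds: int):
        self.funds = funds
        self.paused = False

    def withdraw(self, amount: int) -> None:
        if self.paused:
            raise RuntimeError("vault is paused")
        if amount > self.funds:
            raise ValueError("insufficient funds")
        self.funds -= amount


class OutflowMonitor:
    """Pauses the vault once outflows exceed a set share of the starting funds."""

    def __init__(self, vault: PausableVault, max_outflow_pct: int = 20):
        self.vault = vault
        self.baseline = vault.funds
        self.max_outflow_pct = max_outflow_pct

    def check(self) -> bool:
        outflow = self.baseline - self.vault.funds
        if outflow * 100 > self.baseline * self.max_outflow_pct:
            self.vault.paused = True   # response action: stop further withdrawals
        return self.vault.paused
```

The design choice that matters is that the alert is wired to an action. A monitor that only sends a message still depends on someone being awake to read it.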

Security is not a set of checkboxes to fill and set aside. Security work should not end after the audit. There are many cases, such as the Nomad bridge hack, where the exploit was based on a mistake introduced in a post-audit upgrade.

2/ The crypto security community’s process for responding to hacks will become more organized and streamlined. Whenever a hack occurs, contributors flood into crypto security group chats eager to help, but a lack of organization means that important details can be lost in the chaos. I see a future where some of these group chats transition into more structured organizations that:

- Use on-chain monitoring and social media monitoring tools to detect active attacks quickly.

- Use security information and event management tools to coordinate work.

- Separate workstreams, with different channels for communicating about whitehat efforts, data analysis, root cause theorizing and other tasks.

Credit: This report wouldn’t have been possible without the writings of CIA Officer, Kris Oosthoek, Rekt and Emiliano Bonassi
